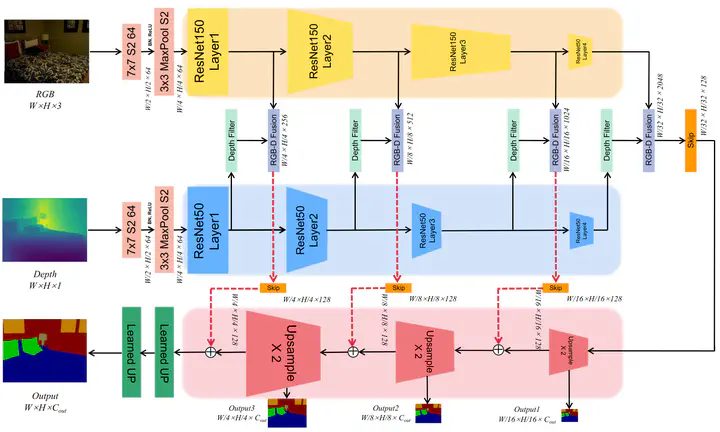

ADNet: Asymmetric Dual-mode Network for RGB-D Indoor Scene Parsing

Abstract

Indoor scene parsing is a fundamental task in robotics. In recent years, semantic segmentation methods that use both RGB and depth images have achieved excellent performance. However, depth images are often of low quality, suffering from noise or missing information. Moreover, the spatial information contained in depth images belongs to a different modality from the color information in RGB images, so the two cannot simply be superimposed for segmentation. These limitations can lead to unsatisfactory segmentation results. To overcome them, we propose the Asymmetric Dual-mode Network (ADNet), which fuses color and spatial information more efficiently. The core of ADNet is a shallow network that extracts spatial information from depth images, which improves the utilization of the network. In addition, a Depth Filter (DF) operator is added to the network to refine the spatial information and reduce the effect of noise on segmentation. We evaluate ADNet on the widely used indoor dataset NYUv2 and compare it with state-of-the-art approaches on this dataset; our proposed model achieves competitive performance.
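The abstract does not specify how the Depth Filter (DF) operator works. As a purely illustrative stand-in, the sketch below shows one common way to suppress noise and fill missing readings in a depth map: a median filter that ignores invalid pixels. The function name `median_depth_filter`, the convention that a value of zero marks a missing reading, and the 3x3 window are all assumptions made for this example, not details of ADNet.

```python
from statistics import median


def median_depth_filter(depth, radius=1, invalid=0.0):
    """Replace each pixel with the median of the valid pixels in its window.

    Hypothetical helper, not the paper's DF operator. `depth` is a list of
    rows; pixels equal to `invalid` are treated as missing readings.
    """
    height = len(depth)
    width = len(depth[0]) if height else 0
    out = [[invalid] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Collect valid readings in the (2 * radius + 1)^2 neighbourhood,
            # clipped at the image border.
            window = [
                depth[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
                if depth[ny][nx] != invalid
            ]
            # Leave the pixel marked invalid when no valid neighbour exists.
            out[y][x] = median(window) if window else invalid
    return out


depth_map = [
    [1.0, 1.0, 1.0],
    [1.0, 0.0, 1.0],  # centre pixel: missing reading
    [1.0, 9.0, 1.0],  # bottom-centre pixel: noise spike
]
filtered = median_depth_filter(depth_map)
```

In this toy input, both the missing centre reading and the isolated spike are replaced by the value of the surrounding surface. A learned operator inside a network would behave differently; the sketch only illustrates the kind of depth noise the abstract refers to.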
